GNO-SYS is founded on the principle of “operationalizing” geospatial technology. Our objective is to simplify geospatial infrastructure for space companies and others to allow engineers, scientists, and technicians to collaboratively create products and solutions in a reliable, repeatable, and robust system.

The pace of designing, building, and launching a remote sensing satellite into space is fast and accelerating. And while getting a satellite into space is an incredible achievement, the hardware by itself does not create a product. An often-overlooked or underestimated component of a space-based sensor is a robust and scalable downstream processing system that enables the use and exploitation of the signals recorded by the satellite sensor. If you are on the receiving end of a satellite raw-data downlink, you’re probably thinking about:

To address all these important considerations, companies need to focus on “operationalization” of the geospatial technology stack. This is frequently a challenge for companies that launch spacecraft and operate satellite constellations.

The same considerations are true for scaling airborne or terrestrial sensors such as LiDAR mobile mappers.

“Operationalization”

Taking a system from a functional prototype and scaling it up into a reliable, repeatable, and robust system to consistently create and deliver geospatial data products.

Most companies have various stakeholders within a single organization that are interested in creating the data products. In other words, there are many users and departments who need to get their hands on the data. These teams may not always agree on the types of products to be built, or the relative importance of each. The science team, for example, is eager to get access to the data to develop new algorithms and models to build capabilities. They are worried about:

The development team may want to access the data to create new APIs and applications. They are asking questions like:

Without a common architecture it’s difficult to create a framework for the company to work together as a cohesive team. But with a common, enhanced architecture, all stakeholders and users will get the right access and support they need to build their products and solutions.

And that’s exactly what we specialize in!

GNO-SYS develops customized high-end software architectures for the optimal operationalization of geospatial data for aerospace and other companies. We do this with the following mindset:

Each company we work with is unique, yet in our experience, there is a common set of core infrastructure components in all systems.

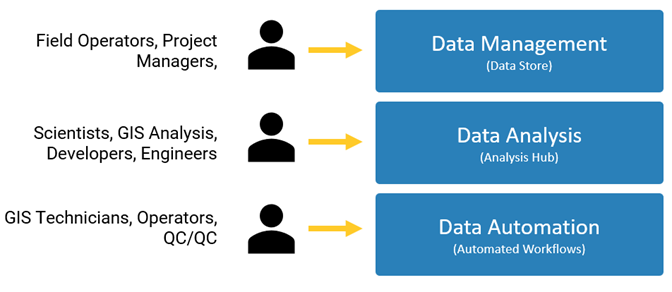

The combination of these three components creates the framework for all things downstream:

These three components form the foundation for a scalable, repeatable, and robust geospatial data management and processing system. With this foundation in place we can begin to automate and customize the workflows to suit science teams, development teams, and management.

Our goal is to have an end-to-end system for collaboration and creation of data products. For a space company it may be a scenario like this:

That’s what we call “Operationalization”.