Lidar has become a cornerstone technology for many applications, from topographic mapping and urban planning to digital terrain models and environmental monitoring. As lidar datasets grow in complexity and volume, the need for efficient and customized processing workflows becomes more critical.

In this blog post, we explore how companies can optimize lidar processing with tailored workflows that help maximize efficiency and accuracy.

Many companies lack in-house programming expertise, so they face a dilemma: either learn Python programming themselves or hire external script writers, whose solutions may not be optimal.

Many companies also struggle with data organization, storing data across a mix of local hard drives and network locations. This often leads to chaotic data management and hinders collaboration between departments.

Developing lidar processing workflows tailored to specific needs is key to addressing these challenges. Companies can do this by scripting, automating, and designing workflows in languages such as Python or MATLAB, which makes data processing and analysis more efficient. Cloud-based processing systems add scalable computing resources and specialized tools for handling large datasets and running complex algorithms without investing in extensive on-premises infrastructure. Cloud environments also make collaboration easier, because stakeholders can access the data and contribute from anywhere. Combining customized workflows with cloud-based processing improves efficiency and accuracy and makes it easier to adapt to each project's requirements.

At GNO-SYS, when we work with a client, we begin by clearly defining the objectives of the lidar processing workflow. It is important to know the end goal, whether that is terrain modeling, feature extraction, or change detection. Our team relies on GNOde, our cloud-based, scalable processing system, to streamline the entire workflow. We have built and automated the required processing steps in a cloud environment, so GNOde can assemble a complete lidar processing workflow from start to finish.

Automating lidar data processing means establishing a systematic workflow that executes tasks such as importing raw scans, filtering noise, and classifying points without manual intervention. This involves defining the processing steps, developing scripts or using existing software tools, and monitoring performance for accuracy and reliability. Once optimized, the automated pipeline handles large volumes of data efficiently, reducing processing time and manual effort while producing consistent, reliable results. This scalable approach boosts productivity for applications such as land use mapping, urban planning, and infrastructure monitoring.
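To make the idea of chained, hands-off steps concrete, here is a minimal sketch of an import, filter, and classify pipeline in plain Python. It is not how GNOde implements these steps: the function names (`load_xyz`, `filter_noise`, `classify`, `run_pipeline`) are hypothetical, the noise filter is a simple median-absolute-deviation test on elevation, and the "ground" rule (within a tolerance of the lowest point in each grid cell) is a deliberately crude stand-in. Production pipelines typically use dedicated tools such as PDAL for outlier removal and ground classification. The output labels follow the ASPRS LAS convention of 2 for ground and 1 for unclassified.

```python
from statistics import median

GROUND, UNCLASSIFIED = 2, 1  # ASPRS LAS classification codes


def load_xyz(lines: list[str]) -> list[tuple[float, float, float]]:
    """Import step: parse whitespace-separated 'x y z' records."""
    points = []
    for line in lines:
        x, y, z = (float(v) for v in line.split()[:3])
        points.append((x, y, z))
    return points


def filter_noise(points, max_dev: float = 3.0):
    """Noise step: drop points whose elevation lies more than `max_dev`
    median absolute deviations (MAD) from the median elevation."""
    zs = [z for _, _, z in points]
    med = median(zs)
    mad = median(abs(z - med) for z in zs) or 1e-9  # avoid division by zero
    return [p for p in points if abs(p[2] - med) / mad <= max_dev]


def classify(points, cell: float = 1.0, height_tol: float = 0.5):
    """Classification step (toy rule): a point is ground if it lies within
    `height_tol` of the lowest point in its grid cell."""
    def cell_of(x, y):
        return (int(x // cell), int(y // cell))

    lowest: dict[tuple[int, int], float] = {}
    for x, y, z in points:
        key = cell_of(x, y)
        lowest[key] = min(z, lowest.get(key, z))
    return [
        (x, y, z, GROUND if z - lowest[cell_of(x, y)] <= height_tol else UNCLASSIFIED)
        for x, y, z in points
    ]


def run_pipeline(raw_lines: list[str]):
    """Chain the steps so a tile is processed without manual intervention."""
    return classify(filter_noise(load_xyz(raw_lines)))
```

Because each step takes the previous step's output, swapping in a better filter or classifier only changes one function, while the pipeline itself stays the same.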

GNO-SYS workflows operate as follows. Dropping data into an S3 bucket triggers the workflow. First, the uploaded zip file is decompressed and its contents are extracted to an output folder in cloud storage. Subsequent steps run in parallel wherever possible to improve efficiency. For instance, point cloud classification and adding the raw data to the SpatioTemporal Asset Catalog (STAC) happen simultaneously because they are independent of each other. Further down the workflow, another set of independent tasks also runs in parallel, adding to the overall efficiency.
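The trigger, unzip, and fan-out pattern described above can be sketched in a few lines of Python. This is an illustrative sketch, not GNOde's code: a local directory stands in for cloud storage, and `classify_point_cloud` and `add_raw_to_stac` are hypothetical placeholders. The only real-world structure assumed is AWS's S3 event notification format (`Records[].s3.bucket.name` and `Records[].s3.object.key`, with URL-encoded keys), which is what a function subscribed to bucket uploads would receive.

```python
import io
import os
import tempfile
import zipfile
from concurrent.futures import ThreadPoolExecutor
from urllib.parse import unquote_plus


def keys_from_s3_event(event: dict) -> list[tuple[str, str]]:
    """Extract (bucket, key) pairs from an S3 event notification.
    Object keys arrive URL-encoded, so they are decoded here."""
    return [
        (rec["s3"]["bucket"]["name"], unquote_plus(rec["s3"]["object"]["key"]))
        for rec in event.get("Records", [])
    ]


def decompress(zip_bytes: bytes, output_dir: str) -> list[str]:
    """First step: unzip the upload into an output folder.
    A local directory stands in for cloud storage in this sketch."""
    with zipfile.ZipFile(io.BytesIO(zip_bytes)) as zf:
        zf.extractall(output_dir)
        names = [n for n in zf.namelist() if not n.endswith("/")]
    return [os.path.join(output_dir, n) for n in names]


# Hypothetical placeholders for two independent downstream steps.
def classify_point_cloud(paths: list[str]) -> str:
    return f"classified {len(paths)} file(s)"


def add_raw_to_stac(paths: list[str]) -> str:
    return f"catalogued {len(paths)} file(s)"


def run_workflow(zip_bytes: bytes, output_dir: str) -> dict[str, str]:
    files = decompress(zip_bytes, output_dir)
    # Neither step depends on the other's output, so both are submitted
    # at once and run concurrently; we then wait for both results.
    with ThreadPoolExecutor() as pool:
        futures = {
            "classification": pool.submit(classify_point_cloud, files),
            "stac": pool.submit(add_raw_to_stac, files),
        }
        return {name: fut.result() for name, fut in futures.items()}


if __name__ == "__main__":
    # Build a small in-memory zip standing in for an uploaded delivery.
    buf = io.BytesIO()
    with zipfile.ZipFile(buf, "w") as zf:
        zf.writestr("scan_001.laz", b"placeholder")
        zf.writestr("scan_002.laz", b"placeholder")
    with tempfile.TemporaryDirectory() as out:
        print(run_workflow(buf.getvalue(), out))
```

In a real deployment, the decompression step would read the object named in the event from the bucket, and each parallel branch would more likely be a separate cloud job than a thread; the dependency structure is the same either way.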

Workflows can also be configured to start processes and automatically notify stakeholders when they finish. Steps that pause the workflow for approval or quality control checks can be added wherever human review is needed. GNOde, our cloud-based data management system, serves as the repository for these tasks and jobs.
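One way to picture an approval pause is as a small state machine: the run advances until it reaches a step flagged for review, notifies someone, and waits for an explicit approval before continuing. The sketch below assumes hypothetical `Step` and `WorkflowRun` classes and a plain callback for notifications (in practice this might be email or a chat message); it illustrates the pattern only and is not GNOde's API.

```python
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class Step:
    name: str
    run: Callable[[dict], dict]
    needs_approval: bool = False  # pause here for human QC before running


@dataclass
class WorkflowRun:
    steps: list[Step]
    notify: Callable[[str], None]  # hypothetical notification hook
    context: dict = field(default_factory=dict)
    position: int = 0
    status: str = "pending"

    def advance(self) -> str:
        """Run steps until completion or until a step requires approval."""
        while self.position < len(self.steps):
            step = self.steps[self.position]
            if step.needs_approval and self.status != "approved":
                self.status = "awaiting_approval"
                self.notify(f"'{step.name}' is waiting for QC approval")
                return self.status
            self.context = step.run(self.context)
            self.position += 1
            self.status = "running"  # approval only covers the step just run
        self.status = "completed"
        self.notify("workflow completed")
        return self.status

    def approve(self) -> str:
        """Record a reviewer's approval and resume the run."""
        if self.status != "awaiting_approval":
            raise RuntimeError("nothing to approve")
        self.status = "approved"
        return self.advance()
```

Resetting the status to `running` after each step means that every gated step needs its own approval, rather than one approval unlocking the rest of the run.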

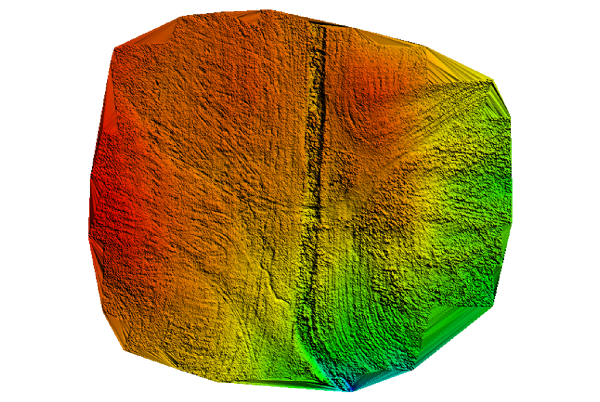

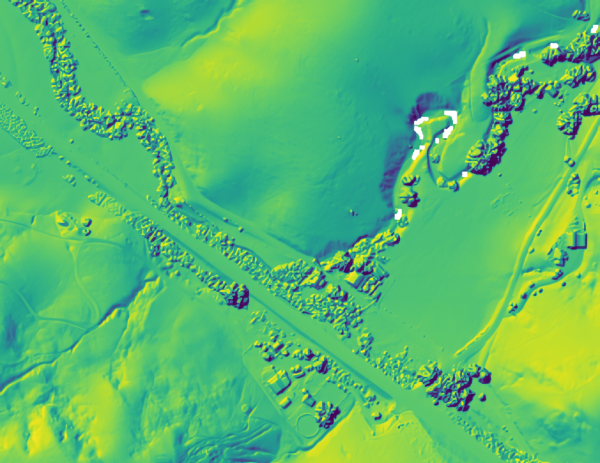

Examples of such tasks include common operations like “create DSM” (digital surface model), “create hillshade,” and “void filling.” The goal is to build a comprehensive library of these smaller tasks so that customers can construct or customize workflows in GNOde. This flexibility lets customers manage and process their data efficiently according to their specific requirements.
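A task library like this can be modeled as a registry that maps task names to functions, with a workflow reduced to an ordered list of names. The sketch below is a hypothetical illustration of that idea, not GNOde's implementation. It implements two of the named tasks in simplified form: “create DSM” keeps the highest elevation per grid cell (assuming non-negative coordinates in a local frame), and “void filling” fills empty cells with the mean of their filled 4-neighbours in a single pass. “create hillshade” is omitted for brevity.

```python
from typing import Callable, Optional

Grid = list[list[Optional[float]]]

# Library of named tasks that workflows can reference by name.
TASKS: dict[str, Callable] = {}


def task(name: str):
    """Decorator that registers a function in the task library."""
    def register(fn: Callable) -> Callable:
        TASKS[name] = fn
        return fn
    return register


@task("create DSM")
def create_dsm(points, cell: float = 1.0) -> Grid:
    """Rasterize points into a surface model, keeping the highest z per cell.
    Assumes non-negative x/y in a local coordinate frame."""
    cols = [int(x // cell) for x, _, _ in points]
    rows = [int(y // cell) for _, y, _ in points]
    grid: Grid = [[None] * (max(cols) + 1) for _ in range(max(rows) + 1)]
    for (_, _, z), r, c in zip(points, rows, cols):
        grid[r][c] = z if grid[r][c] is None else max(grid[r][c], z)
    return grid


@task("void filling")
def fill_voids(grid: Grid) -> Grid:
    """Fill empty cells with the mean of their non-empty 4-neighbours.
    Single pass over the original grid; larger voids may need repeated passes."""
    h, w = len(grid), len(grid[0])
    filled = [row[:] for row in grid]
    for r in range(h):
        for c in range(w):
            if grid[r][c] is not None:
                continue
            neighbours = [
                grid[rr][cc]
                for rr, cc in ((r - 1, c), (r + 1, c), (r, c - 1), (r, c + 1))
                if 0 <= rr < h and 0 <= cc < w and grid[rr][cc] is not None
            ]
            if neighbours:
                filled[r][c] = sum(neighbours) / len(neighbours)
    return filled


def run_tasks(task_names: list[str], data):
    """Run a customer-defined workflow: a sequence of task names."""
    for name in task_names:
        data = TASKS[name](data)
    return data
```

For example, `run_tasks(["create DSM", "void filling"], points)` builds a surface model and patches its gaps; adding a new operation to the library only requires decorating another function.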

The cloud platform provides batch processing capabilities and a set of essential utilities of its own. Customers can use these utilities in their own processes, or customize them and create new ones for their specific needs. Because the platform owns these batch processes, they offer flexibility and can be customized with tools that are not readily available elsewhere. A library of these utilities and sub-step functions supports modular workflows: workflows can call other workflows, so projects can be broken down into manageable components. This modular approach makes it possible to build simple, reusable workflows tailored to different customers' needs.
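Workflows calling workflows falls out naturally if a workflow is simply another callable step. In this sketch, a hypothetical `Workflow` class runs its steps in order over a shared context dictionary; because instances are callable, one workflow can appear as a step inside another. The `logged` helper is a placeholder that only records its name, standing in for real processing tasks.

```python
from dataclasses import dataclass
from typing import Callable

Context = dict


@dataclass
class Workflow:
    """A named sequence of steps. A step is any callable taking and returning
    a context dict; because Workflow is itself callable, a workflow can be
    used as a step inside another workflow."""
    name: str
    steps: list[Callable[[Context], Context]]

    def __call__(self, context: Context) -> Context:
        for step in self.steps:
            context = step(context)
        return context


def logged(label: str) -> Callable[[Context], Context]:
    """Hypothetical stand-in for a real task: it only records that it ran."""
    def step(context: Context) -> Context:
        return {**context, "log": context.get("log", []) + [label]}
    return step


# A reusable sub-workflow...
terrain_products = Workflow(
    "terrain products",
    [logged("create DSM"), logged("void filling"), logged("create hillshade")],
)

# ...embedded in two different client workflows.
delivery_a = Workflow("client A", [logged("unzip"), terrain_products, logged("publish")])
delivery_b = Workflow("client B", [terrain_products, logged("notify")])
```

The terrain sub-workflow is defined once and reused by both client workflows, which is the kind of modularity the paragraph above describes.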

Optimizing workflows offers several advantages. First, they are repeatable and save time: once a workflow is well defined, it performs the same tasks consistently on every run, which also makes results reliable. Second, workflows are user-friendly because they are visual, so users can easily understand and manipulate their components. Finally, workflows let people without coding experience build processes. Because the underlying tools are already written in Python, users can focus on learning to use those tools within a workflow rather than on mastering coding.

Our solution also tackles the data organization problem by centralizing results in an online data store, where they can be easily searched, downloaded, and shared. This keeps data management efficient and supports seamless collaboration across teams.
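Centralizing results in a STAC catalog means each processed file is described by a STAC Item: a GeoJSON Feature with an `id`, `geometry`, `bbox`, a `datetime` property, `links`, and `assets` pointing at the data. The sketch below builds minimal STAC 1.0.0 Items and filters them with a simple bounding-box intersection test, which is the core of a spatial search. The function names, item IDs, and `s3://example-bucket/...` hrefs are hypothetical; a real deployment would more likely query a STAC API's search endpoint or use a library such as pystac. The bbox test ignores antimeridian-crossing boxes.

```python
def make_item(item_id: str, bbox: tuple[float, float, float, float],
              datetime_utc: str, href: str) -> dict:
    """Build a minimal STAC Item (STAC 1.0.0) describing one processed file."""
    west, south, east, north = bbox
    # Closed, counter-clockwise exterior ring, as GeoJSON expects.
    ring = [[west, south], [east, south], [east, north], [west, north], [west, south]]
    return {
        "type": "Feature",
        "stac_version": "1.0.0",
        "id": item_id,
        "geometry": {"type": "Polygon", "coordinates": [ring]},
        "bbox": [west, south, east, north],
        "properties": {"datetime": datetime_utc},
        "links": [],
        "assets": {"data": {"href": href, "roles": ["data"]}},
    }


def search_bbox(items: list[dict], bbox: tuple[float, float, float, float]) -> list[dict]:
    """Return items whose bounding box intersects the query box."""
    w, s, e, n = bbox
    return [
        it for it in items
        if it["bbox"][0] <= e and it["bbox"][2] >= w
        and it["bbox"][1] <= n and it["bbox"][3] >= s
    ]
```

Because every result carries the same metadata structure, team members can find data by location and time without knowing where or how it was processed.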

For a recent project, we worked with a client using the STAC/GNOde catalog. The company specializes in terrestrial lidar scanning in urban environments and needed significant scalability for large projects. They also prioritized ease of use, aiming to reduce the person-hours required for processing and data management. Their primary goals were to streamline their processing workflow, access the results efficiently, and share the processed data with users in multiple geographic locations.

With the workflow we designed, they could drop their zip files into the system and later retrieve the processed files without writing any code. This let them focus solely on the output rather than on the underlying processing mechanisms.

Their objective was to process lidar data and make the results accessible to other team members without requiring them to understand the intricacies of the processing pipeline. With access to the catalog, team members could use the processed data in their own work without being burdened by technical details. Centralizing all data in a STAC catalog also significantly improved data organization, a common challenge for aerospace and geospatial companies.

Integrating machine learning capabilities will enable richer data classification, such as distinguishing between different types of buildings, with additional spatial data used to augment the process. Our expertise in geospatial data processing lets us develop workflows tailored to specific requirements. By understanding the desired inputs and outputs, we can design workflows that optimize efficiency and functionality, so our clients can focus on their objectives while we manage the technical aspects behind the scenes.

In conclusion, optimizing lidar processing with customized workflows is essential for aerospace companies to realize the full potential of this technology. As lidar continues to play a crucial role in aerospace applications, mastering customized processing workflows will be key to staying ahead in the industry. Engaging a specialized geospatial company like ours gives you access to combined expertise in geospatial data and software development. This combination enables seamlessly integrated solutions tailored precisely to your requirements, while safeguarding your intellectual property rights. Working with us also lets you avoid costly software licenses. Our holistic approach addresses your immediate needs and uncovers efficiencies that might otherwise go unnoticed.